Last week Apple announced changes to the iCloud service for users residing in mainland China. Beginning on February 28th, all users who have specified China as their country/region will have their iCloud data transferred to the GCBD cloud services operator in Guizhou, China.

Chinese news sources optimistically describe the move as a way to offer improved network performance to Chinese users, while Apple admits that the change was mandated by new Chinese regulations on cloud services. Both explanations are almost certainly true. But neither answers the following question: regardless of where it’s stored, how secure is this data?

Apple offers the following:

“Apple has strong data privacy and security protections in place and no backdoors will be created into any of our systems”

That sounds nice. But what, precisely, does it mean? If Apple is storing user data on Chinese services, we have to at least accept the possibility that the Chinese government might wish to access it — and possibly without Apple’s permission. Is Apple saying that this is technically impossible?

This is a question, as you may have guessed, that boils down to encryption.

Does Apple encrypt your iCloud backups?

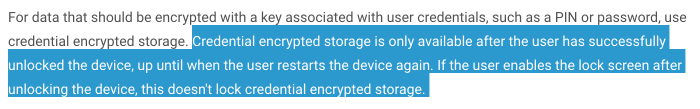

Unfortunately there are many different answers to this question, depending on which part of iCloud you’re talking about, and — ugh — which definition you use for “encrypt”. The dumb answer is the one given in the chart on the right: all iCloud data probably is encrypted. But that’s the wrong question. The right question is: who holds the key(s)?

There’s a pretty simple thought experiment you can use to figure out whether you (or a provider) control your encryption keys. I call it the “mud puddle test”. It goes like this:

Imagine you slip in a mud puddle, in the process (1) destroying your phone, and (2) developing temporary amnesia that causes you to forget your password. Can you still get your iCloud data back? If you can (with the help of Apple Support), then you don’t control the key.

With one major exception — iCloud Keychain, which I’ll discuss below — iCloud fails the mud puddle test. That’s because most Apple files are not end-to-end encrypted. In fact, Apple’s iOS security guide is clear that it sends the keys for encrypted files out to iCloud.

However, there is a wrinkle. You see, iCloud isn’t entirely an Apple service, not even here in the good-old U.S.A. In fact, the vast majority of iCloud data isn’t actually stored by Apple at all. Every time you back up your phone, your (encrypted) data is transmitted directly to a variety of third-party cloud service providers including Amazon, Google and Microsoft.

And this is, from a privacy perspective, mostly** fine! Those services act merely as “blob stores”, storing unreadable encrypted data files uploaded by Apple’s customers. At least in principle, Apple controls the encryption keys for that data, ideally on a server located in a dedicated Apple datacenter.*
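To make the "blob store" split concrete, here's a minimal Python sketch. Everything in it is an assumption for illustration: `BlobStore`, `KeyServer`, and the toy cipher `keystream_xor` are hypothetical names, and nothing here reflects how Apple actually implements iCloud. The only point is that whoever runs the storage sees ciphertext, while whoever runs the key server can decrypt.

```python
import hashlib
import secrets


def keystream_xor(key: bytes, data: bytes) -> bytes:
    """Toy stream cipher: XOR data with SHA-256(key || offset) blocks.

    Illustration only and NOT secure. A real system would use an
    authenticated cipher such as AES-GCM.
    """
    out = bytearray()
    for offset in range(0, len(data), 32):
        block = hashlib.sha256(key + offset.to_bytes(8, "big")).digest()
        chunk = data[offset:offset + 32]
        out.extend(b ^ k for b, k in zip(chunk, block))
    return bytes(out)


class BlobStore:
    """Hypothetical third-party storage: it only ever holds ciphertext."""

    def __init__(self):
        self.blobs = {}

    def put(self, blob_id: str, ciphertext: bytes) -> None:
        self.blobs[blob_id] = ciphertext

    def get(self, blob_id: str) -> bytes:
        return self.blobs[blob_id]


class KeyServer:
    """Hypothetical key service, run separately from the storage."""

    def __init__(self):
        self._keys = {}

    def new_key(self, blob_id: str) -> bytes:
        key = secrets.token_bytes(32)
        self._keys[blob_id] = key
        return key

    def key_for(self, blob_id: str) -> bytes:
        return self._keys[blob_id]


def backup(store: BlobStore, keys: KeyServer, blob_id: str, plaintext: bytes) -> None:
    # Encrypt under a fresh key; only the ciphertext leaves for storage.
    key = keys.new_key(blob_id)
    store.put(blob_id, keystream_xor(key, plaintext))


def restore(store: BlobStore, keys: KeyServer, blob_id: str) -> bytes:
    # Decryption needs both parties: the blob and the key.
    return keystream_xor(keys.key_for(blob_id), store.get(blob_id))
```

In this model the interesting question is never "is the blob encrypted?" but "who operates `KeyServer`, and where does it live?"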

So what exactly is Apple storing in China?

Good question!

You see, it’s entirely possible that the new Chinese cloud stores will perform the same task that Amazon AWS, Google, or Microsoft do in the U.S. That is, they’re storing encrypted blobs of data that can’t be decrypted without first contacting the iCloud mothership back in the U.S. That would at least be one straightforward reading of Apple’s announcement, and it would also be the most straightforward mapping between iCloud’s current architecture and whatever it is Apple is doing in China.

Of course, this interpretation seems hard to swallow. In part this is due to the fact that some of the new Chinese regulations appear to include guidelines for user monitoring. I’m no lawyer, and certainly not an expert in Chinese law — so I can’t tell you if those would apply to backups. But it’s at least reasonable to ask whether Chinese law enforcement agencies would accept the total inability to access this data without phoning home to Cupertino, not to mention that this would give Apple the ability to instantly wipe all Chinese accounts. Solving these problems (for China) would require Apple to store keys as well as data in Chinese datacenters.

The critical point is that these two interpretations are not compatible. One implies that Apple is simply doing business as usual. The other implies that they may have substantially weakened the security protections of their system — at least for Chinese users.

And here’s my problem. If Apple needs to fundamentally rearchitect iCloud to comply with Chinese regulations, that’s certainly an option. But they should say explicitly and unambiguously what they’ve done. If they don’t make things explicit, then it raises the possibility that they could make the same changes for any other portion of the iCloud infrastructure without announcing it.

It seems like it would be a good idea for Apple just to clear this up a bit.

You said there was an exception. What about iCloud Keychain?

I said above that there’s one place where iCloud passes the mud puddle test. This is Apple’s Cloud Key Vault, which is currently used to implement iCloud Keychain. This is a special service that stores passwords and keys for applications, using a much stronger protection level than is used in the rest of iCloud. It’s a good model for how the rest of iCloud could one day be implemented.

For a description, see here. Briefly, the Cloud Key Vault uses a specialized piece of hardware called a Hardware Security Module (HSM) to store encryption keys. This HSM is a physical box located on Apple property. Users can access their own keys if and only if they know their iCloud Keychain password — which is typically the same as the PIN/password on your iOS device. However, if anyone attempts to guess this PIN too many times, the HSM will wipe that user’s stored keys.
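The guess-limiting behavior is the heart of this design, so here's a rough Python sketch of the idea. To be clear about what's assumed: `ToyKeyVault`, the limit of 10 attempts, and the PBKDF2 verifier are all my own stand-ins for illustration. The real Cloud Key Vault enforces this inside tamper-resistant hardware, which ordinary software like this cannot imitate.

```python
import hashlib
import hmac
import secrets


class ToyKeyVault:
    """Software stand-in for an HSM that wipes keys after too many bad guesses."""

    MAX_ATTEMPTS = 10  # assumed value; the text only says "too many times"

    def __init__(self):
        self._records = {}

    @staticmethod
    def _derive(passcode: str, salt: bytes) -> bytes:
        # Slow hash so the stored verifier is not the passcode itself.
        return hashlib.pbkdf2_hmac("sha256", passcode.encode(), salt, 10_000)

    def enroll(self, user: str, passcode: str, escrowed_key: bytes) -> None:
        salt = secrets.token_bytes(16)
        self._records[user] = {
            "salt": salt,
            "verifier": self._derive(passcode, salt),
            "key": escrowed_key,
            "failures": 0,
        }

    def recover(self, user: str, passcode: str):
        record = self._records.get(user)
        if record is None:
            return None  # never enrolled, or already wiped
        guess = self._derive(passcode, record["salt"])
        if hmac.compare_digest(guess, record["verifier"]):
            record["failures"] = 0
            return record["key"]
        record["failures"] += 1
        if record["failures"] >= self.MAX_ATTEMPTS:
            # Wipe the record: after this, even the right passcode fails.
            del self._records[user]
        return None
```

The important property is that the counter and the wipe live in the same box as the key. If the operator could reset `failures` or copy `_records` out, the limit would be meaningless, which is why the real thing needs hardware.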

The critical thing is that the “anyone” mentioned above includes even Apple themselves. In short: Apple has designed a key vault that even they can’t be forced to open. Only customers can get their own keys.

What’s strange about the recent Apple announcement is that users in China will apparently still have access to iCloud Keychain. This means that either (1) at least some data will be totally inaccessible to the Chinese government, or (2) Apple has somehow weakened the version of Cloud Key Vault deployed to Chinese users. The latter would be extremely unfortunate, and it would raise even deeper questions about the integrity of Apple’s systems.

Probably there’s nothing funny going on, but this is an example of how Apple’s vague (and imprecise) explanations make it harder to trust their infrastructure around the world.

So what should Apple do?

Unfortunately, the problem with Apple’s disclosure of its China news is, well, really just a version of the same problem that’s existed with Apple’s entire approach to iCloud.

Where Apple provides overwhelming detail about their best security systems (file encryption, iOS, iMessage), they provide distressingly little technical detail about the weaker links like iCloud encryption. We know that Apple can access and even hand over iCloud backups to law enforcement. But what about Apple’s partners? What about keychain data? How is this information protected? Who knows.

This vague approach to security might make it easier for Apple to brush off the security impact of changes like the recent China news (“look, no backdoors!”). But it also confuses the picture, and calls into doubt any technical security improvements that Apple might be planning to make in the future. For example, this article from 2016 claims that Apple is planning stronger overall encryption for iCloud. Are those plans scrapped? And if not, will those plans fly in the new Chinese version of iCloud? Will there be two technically different versions of iCloud? Who even knows?

And at the end of the day, if Apple can’t trust us enough to explain how their systems work, then maybe we shouldn’t trust them either.

Notes:

* This is actually just a guess. Apple could also outsource their key storage to a third-party provider, even though this would be dumb.

** A big caveat here is that some iCloud backup systems use convergent encryption, also known as “message locked encryption”. The idea in these systems is that file encryption keys are derived by hashing the file itself. Even if a cloud storage provider does not possess encryption keys, it might be able to test if a user has a copy of a specific file. This could be problematic. However, it’s not really clear from Apple’s documentation if this attack is feasible. (Thanks to RPW for pointing this out.)
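For the curious, here's a small Python sketch of why convergent encryption allows this kind of test. It's a toy under loud assumptions: the function names are hypothetical, the cipher is a throwaway XOR keystream rather than anything Apple uses, and whether the attack works against real iCloud backups is exactly the open question above.

```python
import hashlib


def convergent_key(file_bytes: bytes) -> bytes:
    # The key is derived from the file itself, so equal files get equal keys.
    return hashlib.sha256(file_bytes).digest()


def toy_encrypt(key: bytes, data: bytes) -> bytes:
    """Deterministic toy cipher (XOR with SHA-256 keystream). NOT secure."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        block = hashlib.sha256(key + offset.to_bytes(8, "big")).digest()
        out.extend(b ^ k for b, k in zip(data[offset:offset + 32], block))
    return bytes(out)


def convergent_encrypt(file_bytes: bytes) -> bytes:
    return toy_encrypt(convergent_key(file_bytes), file_bytes)


def provider_has_file(stored_blobs: set, candidate: bytes) -> bool:
    # The provider holds no user keys, but it can encrypt a file it
    # already knows and look for a matching ciphertext.
    return convergent_encrypt(candidate) in stored_blobs
```

Note that the provider learns nothing about files it can't already guess. The risk is confirmation: checking whether some user stores a specific, known file.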
